Monitoring Hadoop and HBase Clusters with Ganglia

Source: 程序員人生. Published: 2015-03-23 08:27:05

The introductory material is taken from: http://www.uml.org.cn/sjjm/201305171.asp

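1. Ganglia Overview

Ganglia is an open-source monitoring project started at UC Berkeley and designed to monitor thousands of nodes. Every machine runs a daemon named gmond that collects and sends metric data (such as processor speed and memory usage), gathering it from the operating system and the specified hosts. A host that receives all the metric data can display it and can pass a condensed form of it up a hierarchy. This hierarchical model is what allows Ganglia to scale well. gmond adds very little system load, so it can run on every machine in the cluster without affecting user performance.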

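1.1 Ganglia Components

The Ganglia monitoring suite has three main parts: gmond, gmetad, and the web interface, usually called ganglia-web.

Gmond: a daemon that runs on every node to be monitored. It collects monitoring statistics and sends and receives statistics on the same multicast or unicast channel. If it is a sender (mute=no), it collects basic metrics such as system load (load_one) and CPU utilization, and it also sends user-defined metrics added through C/Python modules. If it is a receiver (deaf=no), it aggregates all metrics sent from other hosts and keeps them in an in-memory buffer.

Gmetad: also a daemon. It periodically polls the gmonds, pulls their data, and stores the metrics in the RRD storage engine. It can query multiple clusters and aggregate their metrics. The web front end also relies on it when generating the user interface.

Ganglia-web: as the name suggests, the web front end. It should be installed on the machine that runs gmetad so that it can read the RRD files.

A cluster is a logical grouping of hosts and metric data, for example database servers, web servers, production, test, or QA. Clusters are completely separate from one another, and each cluster needs its own gmond instances.

In general, each cluster needs one receiving gmond and each site needs one gmetad.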

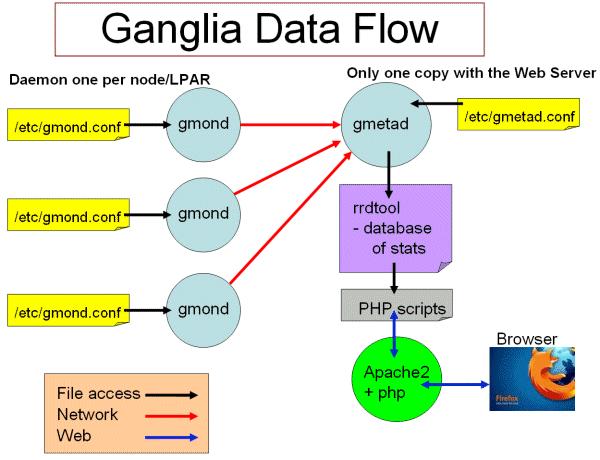

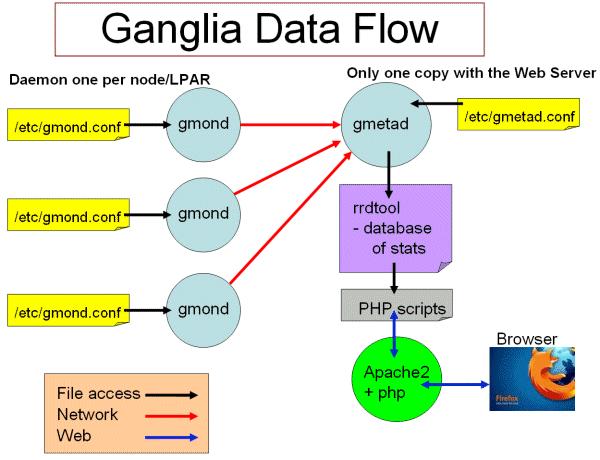

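Figure 1: Ganglia workflow

The Ganglia workflow is shown in Figure 1:

On the left are the gmond processes running on each node. Each process is configured solely by the /etc/gmond.conf file on its node (in the installation described below, the file is /etc/ganglia/gmond.conf), so this file has to be installed and configured on every monitored node.

At the top right is the central machine, which carries more responsibility (usually one of the machines in the cluster, although it does not have to be). It runs the gmetad process, which collects the information from all nodes and stores it with RRDtool. This process is configured solely by /etc/gmetad.conf (in the installation described below, /etc/ganglia/gmetad.conf).

The bottom right shows the web side. When the site is browsed, PHP scripts are invoked that fetch data from the RRDtool database and generate the charts dynamically.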

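1.2 Ganglia Operating Modes (Unicast and Multicast)

Ganglia can collect data in unicast or multicast mode. Multicast is the default.

Unicast: a node sends the monitoring data it collects to one or several specific machines. This works across network segments.

Multicast: a node sends the monitoring data it collects to all machines on the same network segment, and it also collects the monitoring data sent by all other machines on that segment. Because the packets are addressed to a multicast group rather than to a specific host, the machines need to be on the same network segment. Within one segment, however, different send channels can be defined.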
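To make the distinction concrete, here is a minimal Python sketch that classifies a channel address and opens a matching UDP sender socket. It is an illustration only, not how gmond itself is implemented. The helper names `channel_kind` and `open_sender` are hypothetical; 239.2.11.71 is the multicast group that appears in the gmond.conf excerpt later in this article, and 10.171.29.191 is the master host used there.

```python
import ipaddress
import socket

GANGLIA_PORT = 8649  # port used throughout this article


def channel_kind(address):
    """Return 'multicast' for a multicast group address, else 'unicast'."""
    return "multicast" if ipaddress.ip_address(address).is_multicast else "unicast"


def open_sender(address, ttl=1):
    """Open a UDP socket suitable for sending metric packets to `address`."""
    sock = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
    if channel_kind(address) == "multicast":
        # A TTL of 1 keeps multicast packets on the local segment.
        sock.setsockopt(socket.IPPROTO_IP, socket.IP_MULTICAST_TTL, ttl)
    return sock


if __name__ == "__main__":
    for addr in ("239.2.11.71", "10.171.29.191"):
        print(addr, "->", channel_kind(addr))
```

A sender built this way would call `sock.sendto(payload, (address, GANGLIA_PORT))`; for a unicast address the packet goes to that one host, which is why unicast can cross network segments.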

2. Installing Ganglia

1. Topology

Three hosts:

10.171.29.191 master

10.171.94.155 slave1

10.251.0.197 slave3

master runs gmetad and the web front end; all three machines run gmond.

Run all of the following steps as root.

2. Install gmetad and the web front end on master

    yum install ganglia-web.x86_64
    yum install ganglia-gmetad.x86_64

3. Install gmond on all three machines

    yum install ganglia-gmond.x86_64

4. On all three machines, edit /etc/ganglia/gmond.conf and change the following sections:

    udp_send_channel {
      #bind_hostname = yes # Highly recommended, soon to be default.
                           # This option tells gmond to use a source address
                           # that resolves to the machine's hostname. Without
                           # this, the metrics may appear to come from any
                           # interface and the DNS names associated with
                           # those IPs will be used to create the RRDs.
      mcast_join = 10.171.29.191
      port = 8649
      ttl = 1
    }

    /* You can specify as many udp_recv_channels as you like as well. */
    udp_recv_channel {
      #mcast_join = 239.2.11.71
      port = 8649
      #bind = 239.2.11.71
    }

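That is, replace the default multicast address with the master's address, and comment out the two IP address lines in udp_recv_channel. (gmond.conf also provides a host directive in udp_send_channel for naming a unicast target.)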

5. On master, edit /etc/ganglia/gmetad.conf

Change data_source to:

    data_source "my cluster" 10.171.29.191
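As an illustration of what this line carries, the following Python sketch parses a data_source entry. It assumes the usual gmetad.conf form, `data_source "name" [polling interval] host[:port] ...`, with a default polling interval of 15 seconds and a default port of 8649; the function name `parse_data_source` is hypothetical and the parser is a simplification, not gmetad's own.

```python
import shlex

DEFAULT_PORT = 8649      # assumed gmond port when none is given
DEFAULT_INTERVAL = 15    # assumed polling interval in seconds


def parse_data_source(line):
    """Parse one data_source line into (name, interval, [(host, port), ...])."""
    tokens = shlex.split(line)  # raises ValueError on an unterminated quote
    if len(tokens) < 3 or tokens[0] != "data_source":
        raise ValueError("expected: data_source \"name\" [interval] host[:port] ...")
    name, rest = tokens[1], tokens[2:]
    interval = DEFAULT_INTERVAL
    if rest[0].isdigit():
        # An all-digit token right after the name is the polling interval.
        interval, rest = int(rest[0]), rest[1:]
    hosts = []
    for item in rest:
        host, _, port = item.partition(":")
        hosts.append((host, int(port) if port else DEFAULT_PORT))
    if not hosts:
        raise ValueError("data_source needs at least one host")
    return name, interval, hosts


if __name__ == "__main__":
    print(parse_data_source('data_source "my cluster" 10.171.29.191'))
```

Note that the cluster name must be closed with a plain ASCII double quote. A typographic closing quote, which can creep in when a line is copied from a web page, leaves the quoted string unterminated, and this sketch rejects such a line with a ValueError.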

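6. Create a symbolic link to the web files

    ln -s /usr/share/ganglia /var/www/ganglia

If this causes problems, copy the contents of /usr/share/ganglia directly into /var/www/ganglia instead.

7. Edit /etc/httpd/conf.d/ganglia.conf so that it reads: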

    #
    # Ganglia monitoring system php web frontend
    #

    Alias /ganglia /usr/share/ganglia

    <Location /ganglia>
      Order deny,allow
      Allow from all
      Allow from 127.0.0.1
      Allow from ::1
      # Allow from .example.com
    </Location>

That is, change Deny from all to Allow from all. Otherwise the page returns a permission error when it is accessed from another machine.
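Why this change matters can be seen from a small model of how Apache evaluates `Order deny,allow`: a request is refused if it matches a Deny rule, unless it also matches an Allow rule, and a request that matches neither is permitted. The Python sketch below is a deliberately simplified model (it only understands `all` and exact IP addresses, whereas Apache also accepts host names and network ranges); the function name `is_allowed` is hypothetical.

```python
def is_allowed(client_ip, deny, allow):
    """Model 'Order deny,allow' for rules that are 'all' or an exact IP."""

    def matches(rules):
        return any(rule == "all" or rule == client_ip for rule in rules)

    if matches(allow):
        return True    # an Allow match overrides any Deny match
    if matches(deny):
        return False   # denied and not rescued by an Allow rule
    return True        # matched nothing: permitted by default


if __name__ == "__main__":
    remote = "10.171.94.155"  # slave1, browsing the page on master

    # Before the change: Deny from all, Allow only from localhost.
    print(is_allowed(remote, deny=["all"], allow=["127.0.0.1", "::1"]))

    # After the change: Allow from all.
    print(is_allowed(remote, deny=[], allow=["all", "127.0.0.1", "::1"]))
```

With the rule set before the change, only requests from the master itself get through, which matches the permission problem described above.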

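8. Start the services (gmetad and Apache on master, gmond on all three machines)

    service gmetad start
    service gmond start
    /usr/sbin/apachectl start

9. Open the page in a browser, where ip is the master's address

http://ip/ganglia

Some notes:

1. The data collected by gmetad is stored under /var/lib/ganglia/rrds/

2. The following command checks whether data is being transmitted:

    tcpdump port 8649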
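Both checks can also be scripted. The Python sketch below rests on two assumptions that are not stated in this article: that gmetad lays out its files as `<rrd root>/<cluster>/<host>/<metric>.rrd`, and that gmond keeps its default TCP accept channel on port 8649, where it writes an XML dump of its current state to any client that connects. All function names are hypothetical.

```python
import socket
import xml.etree.ElementTree as ET
from pathlib import Path


def list_rrd_files(rrd_root="/var/lib/ganglia/rrds"):
    """Return {(cluster, host): [metric names]} for RRD files under rrd_root."""
    found = {}
    for rrd in sorted(Path(rrd_root).glob("*/*/*.rrd")):
        cluster, host = rrd.parent.parent.name, rrd.parent.name
        if host == "__SummaryInfo__":
            continue  # per-cluster summary data, not a real host
        found.setdefault((cluster, host), []).append(rrd.stem)
    return found


def fetch_gmond_xml(host, port=8649, timeout=5.0):
    """Read everything gmond sends on its TCP channel until it closes."""
    chunks = []
    with socket.create_connection((host, port), timeout=timeout) as conn:
        while True:
            data = conn.recv(65536)
            if not data:
                break
            chunks.append(data)
    return b"".join(chunks)


def hosts_and_metrics(xml_bytes):
    """Return {host name: {metric name: value}} from a gmond XML dump."""
    root = ET.fromstring(xml_bytes)
    return {
        host.get("NAME"): {m.get("NAME"): m.get("VAL") for m in host.iter("METRIC")}
        for host in root.iter("HOST")
    }


if __name__ == "__main__":
    for (cluster, host), metrics in list_rrd_files().items():
        print(cluster, host, len(metrics), "metrics on disk")
    live = hosts_and_metrics(fetch_gmond_xml("10.171.29.191"))
    for host, metrics in live.items():
        print(host, len(metrics), "metrics reported by gmond")
```

If a host shows up in the gmond dump but has no RRD files, the likely place to look is the gmetad side (data_source and the gmetad service); if it is missing from the dump as well, the gmond send channel on that host is the first suspect.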

3. Configuring Hadoop and HBase

1. Configuring Hadoop

Edit hadoop-metrics2.properties as follows:

    # syntax: [prefix].[source|sink|jmx].[instance].[options]
    # See package.html for org.apache.hadoop.metrics2 for details

    *.sink.file.class=org.apache.hadoop.metrics2.sink.FileSink

    #namenode.sink.file.filename=namenode-metrics.out
    #datanode.sink.file.filename=datanode-metrics.out
    #jobtracker.sink.file.filename=jobtracker-metrics.out
    #tasktracker.sink.file.filename=tasktracker-metrics.out
    #maptask.sink.file.filename=maptask-metrics.out
    #reducetask.sink.file.filename=reducetask-metrics.out

    # Below are for sending metrics to Ganglia
    #
    # for Ganglia 3.0 support
    # *.sink.ganglia.class=org.apache.hadoop.metrics2.sink.ganglia.GangliaSink30
    #
    # for Ganglia 3.1 support
    *.sink.ganglia.class=org.apache.hadoop.metrics2.sink.ganglia.GangliaSink31

    *.sink.ganglia.period=10

    # default for supportsparse is false
    *.sink.ganglia.supportsparse=true

    *.sink.ganglia.slope=jvm.metrics.gcCount=zero,jvm.metrics.memHeapUsedM=both
    *.sink.ganglia.dmax=jvm.metrics.threadsBlocked=70,jvm.metrics.memHeapUsedM=40

    namenode.sink.ganglia.servers=10.171.29.191:8649
    datanode.sink.ganglia.servers=10.171.29.191:8649
    jobtracker.sink.ganglia.servers=10.171.29.191:8649
    tasktracker.sink.ganglia.servers=10.171.29.191:8649
    maptask.sink.ganglia.servers=10.171.29.191:8649
    reducetask.sink.ganglia.servers=10.171.29.191:8649
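Because each `servers` line is keyed by a daemon prefix, a mistyped prefix would most likely be ignored without any error, and that daemon would simply never report to Ganglia. The Python sketch below is a small sanity check for this; the function names are hypothetical, and the set of expected prefixes is taken from the daemon names that appear in the file itself.

```python
KNOWN_PREFIXES = {
    "namenode", "datanode", "jobtracker",
    "tasktracker", "maptask", "reducetask",
}


def ganglia_servers(text):
    """Return {prefix: servers} for every '<prefix>.sink.ganglia.servers' line."""
    servers = {}
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue  # skip blanks, comments, and lines without a value
        key, value = (part.strip() for part in line.split("=", 1))
        parts = key.split(".")
        if len(parts) == 4 and parts[1:] == ["sink", "ganglia", "servers"]:
            servers[parts[0]] = value
    return servers


def unknown_prefixes(servers):
    """Return prefixes that are neither a known daemon nor the '*' wildcard."""
    return sorted(set(servers) - KNOWN_PREFIXES - {"*"})


if __name__ == "__main__":
    with open("hadoop-metrics2.properties") as handle:
        found = ganglia_servers(handle.read())
    for prefix, target in sorted(found.items()):
        print(prefix, "->", target)
    for prefix in unknown_prefixes(found):
        print("warning: unrecognised daemon prefix:", prefix)
```

The path `hadoop-metrics2.properties` in the usage part is relative; point it at the copy in your Hadoop configuration directory.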

2. Configuring HBase

Edit hadoop-metrics.properties as follows:

    # See http://wiki.apache.org/hadoop/GangliaMetrics
    # Make sure you know whether you are using ganglia 3.0 or 3.1.
    # If 3.1, you will have to patch your hadoop instance with HADOOP-4675
    # And, yes, this file is named hadoop-metrics.properties rather than
    # hbase-metrics.properties because we're leveraging the hadoop metrics
    # package and hadoop-metrics.properties is an hardcoded-name, at least
    # for the moment.
    #
    # See also http://hadoop.apache.org/hbase/docs/current/metrics.html
    # GMETADHOST_IP is the hostname (or) IP address of the server on which the ganglia
    # meta daemon (gmetad) service is running

    # Configuration of the "hbase" context for NullContextWithUpdateThread
    # NullContextWithUpdateThread is a null context which has a thread calling
    # periodically when monitoring is started. This keeps the data sampled
    # correctly.
    hbase.class=org.apache.hadoop.metrics.spi.NullContextWithUpdateThread
    hbase.period=10

    # Configuration of the "hbase" context for file
    # hbase.class=org.apache.hadoop.hbase.metrics.file.TimeStampingFileContext
    # hbase.fileName=/tmp/metrics_hbase.log

    # HBase-specific configuration to reset long-running stats (e.g. compactions)
    # If this variable is left out, then the default is no expiration.
    hbase.extendedperiod = 3600

    # Configuration of the "hbase" context for ganglia
    # Pick one: Ganglia 3.0 (former) or Ganglia 3.1 (latter)
    # hbase.class=org.apache.hadoop.metrics.ganglia.GangliaContext
    hbase.class=org.apache.hadoop.metrics.ganglia.GangliaContext31
    hbase.period=10
    hbase.servers=10.171.29.191:8649

    # Configuration of the "jvm" context for null
    jvm.class=org.apache.hadoop.metrics.spi.NullContextWithUpdateThread
    jvm.period=10

    # Configuration of the "jvm" context for file
    # jvm.class=org.apache.hadoop.hbase.metrics.file.TimeStampingFileContext
    # jvm.fileName=/tmp/metrics_jvm.log

    # Configuration of the "jvm" context for ganglia
    # Pick one: Ganglia 3.0 (former) or Ganglia 3.1 (latter)
    # jvm.class=org.apache.hadoop.metrics.ganglia.GangliaContext
    jvm.class=org.apache.hadoop.metrics.ganglia.GangliaContext31
    jvm.period=10
    jvm.servers=10.171.29.191:8649

    # Configuration of the "rpc" context for null
    rpc.class=org.apache.hadoop.metrics.spi.NullContextWithUpdateThread
    rpc.period=10

    # Configuration of the "rpc" context for file
    # rpc.class=org.apache.hadoop.hbase.metrics.file.TimeStampingFileContext
    # rpc.fileName=/tmp/metrics_rpc.log

    # Configuration of the "rpc" context for ganglia
    # Pick one: Ganglia 3.0 (former) or Ganglia 3.1 (latter)
    # rpc.class=org.apache.hadoop.metrics.ganglia.GangliaContext
    rpc.class=org.apache.hadoop.metrics.ganglia.GangliaContext31
    rpc.period=10
    rpc.servers=10.171.29.191:8649

    # Configuration of the "rest" context for ganglia
    # Pick one: Ganglia 3.0 (former) or Ganglia 3.1 (latter)
    # rest.class=org.apache.hadoop.metrics.ganglia.GangliaContext
    rest.class=org.apache.hadoop.metrics.ganglia.GangliaContext31
    rest.period=10
    rest.servers=10.171.29.191:8649

Restart Hadoop and HBase so that the new metrics settings take effect.
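One detail of the HBase file is easy to miss: keys such as hbase.class, jvm.class and rpc.class are assigned twice, first to NullContextWithUpdateThread and later to GangliaContext31. Assuming the file is read the way java.util.Properties loads a properties file, the later assignment replaces the earlier one, so the Ganglia context is the one in effect. The Python sketch below reproduces that rule to show which values survive; the function name `effective_properties` is hypothetical and the parser ignores properties-file features such as line continuations.

```python
def effective_properties(text):
    """Return (effective values, overridden assignments) for a properties text.

    A later assignment to the same key replaces an earlier one.
    """
    effective = {}
    overridden = []
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue  # skip blanks, comments, and lines without a value
        key, value = (part.strip() for part in line.split("=", 1))
        if key in effective and effective[key] != value:
            overridden.append((key, effective[key]))
        effective[key] = value
    return effective, overridden


if __name__ == "__main__":
    with open("hadoop-metrics.properties") as handle:
        values, replaced = effective_properties(handle.read())
    for key in ("hbase.class", "jvm.class", "rpc.class", "rest.class"):
        print(key, "=", values.get(key))
    for key, old in replaced:
        print("overridden:", key, "was", old)
```

Commenting out the earlier NullContextWithUpdateThread lines would make the intent explicit and would not change the result under this assumption.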
