Machine Learning Python Example: Decision Trees

Source: 程序员人生 (Programmer's Life)  Published: 2015-03-30 08:05:12

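Decision tree learning is one of the most widely used methods of inductive inference. It approximates discrete-valued target functions, and the learned function is represented as a decision tree. A decision tree can take a data set it knows nothing about and extract a series of rules from it; these rules, created from the data set, are what the machine learning algorithm ultimately uses. Advantages of decision trees: low computational cost, output that is easy to understand, insensitivity to missing intermediate values, and the ability to handle irrelevant features. Disadvantage: they are prone to overfitting. Decision trees can handle both discrete (nominal) and continuous (numeric) data, although the ID3 code in this article splits on exact feature values, so continuous features would have to be discretized first.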

The most important question in building a decision tree is how to choose the feature to split on.

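A common choice of algorithm is ID3. The core problem in ID3 is choosing which feature (attribute) to test at each node of the tree: we want the attribute that is most useful for classifying the examples. How can the worth of an attribute be measured quantitatively? For this we need the concepts of entropy and information gain. Entropy, a measure widely used in information theory, characterizes the (im)purity of an arbitrary collection of examples.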

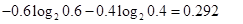

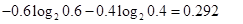
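For reference, here is the standard definition in text form. If the examples in a collection $S$ fall into $c$ classes and $p_i$ is the proportion of $S$ belonging to class $i$, then

$$\mathrm{Entropy}(S) = -\sum_{i=1}^{c} p_i \log_2 p_i$$

where $0 \log_2 0$ is taken to be 0. Entropy is 0 when every example belongs to the same class and, with two classes, reaches its maximum of 1 when the examples are split evenly between them. This is the sum that calcShannonEnt computes over the class labels.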

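Suppose there are 10 training samples, 6 labeled yes and 4 labeled no. What is their entropy? Here there are 2 classes (yes, no); the probability of yes is 0.6 and the probability of no is 0.4, so the entropy is:

$$\mathrm{Entropy}(S) = -0.6\log_2 0.6 - 0.4\log_2 0.4 \approx 0.971$$

With entropy as the measure of impurity, the information gain of an attribute $A$ relative to a collection $S$ is defined as:

$$\mathrm{Gain}(S, A) = \mathrm{Entropy}(S) - \sum_{v \in \mathrm{Values}(A)} \frac{|S_v|}{|S|}\,\mathrm{Entropy}(S_v)$$

where $\mathrm{Values}(A)$ is the set of all possible values of attribute $A$, and $S_v$ is the subset of $S$ for which attribute $A$ has value $v$, i.e. $S_v = \{s \in S \mid A(s) = v\}$. The first term is the entropy of the original collection $S$; the second is the expected entropy after $S$ is partitioned by $A$, namely the weighted sum of the entropies of the subsets, where each weight $\frac{|S_v|}{|S|}$ is the fraction of the original samples that belong to $S_v$. So $\mathrm{Gain}(S, A)$ is the expected reduction in entropy caused by knowing the value of attribute $A$.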
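To make the two formulas concrete, here is a minimal sketch that works directly from class counts rather than from a data set. The function names entropy_from_counts and information_gain are our own, and the split of $S$ into (5 yes, 1 no) and (1 yes, 3 no) is a made-up attribute used only for illustration.

```python
from math import log2


def entropy_from_counts(counts):
    """Entropy in bits of a collection, given the number of examples in each class."""
    total = sum(counts)
    result = 0.0
    for c in counts:
        if c > 0:  # empty classes contribute 0 (0 * log2 0 is taken as 0)
            p = c / total
            result -= p * log2(p)
    return result


def information_gain(parent_counts, subset_counts):
    """Gain(S, A) = Entropy(S) - sum over v of |S_v| / |S| * Entropy(S_v).

    parent_counts: class counts for S.
    subset_counts: one list of class counts per value v of attribute A.
    """
    total = sum(parent_counts)
    expected = sum(sum(sub) / total * entropy_from_counts(sub) for sub in subset_counts)
    return entropy_from_counts(parent_counts) - expected


# The 10-sample example from the text: 6 yes, 4 no
print(round(entropy_from_counts([6, 4]), 3))  # 0.971
# Hypothetical attribute A splitting S into (5 yes, 1 no) and (1 yes, 3 no)
print(round(information_gain([6, 4], [[5, 1], [1, 3]]), 3))  # 0.256
```

A perfect split such as `[[6, 0], [0, 4]]` leaves no uncertainty in either subset, so its gain equals the full entropy of $S$.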

The complete code:

```python
import operator
from math import log
```

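```python
# Toy data set: each row is [no surfacing, flippers, class label]
def createDataSet():
    dataSet = [[1, 1, 'yes'],
               [1, 1, 'yes'],
               [1, 0, 'no'],
               [0, 1, 'no'],
               [0, 1, 'no']]
    labels = ['no surfacing', 'flippers']  # names of the two feature columns
    return dataSet, labels
```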

```python
# Shannon entropy of the class labels (last column) of a data set
def calcShannonEnt(dataSet):
    numEntries = len(dataSet)
    labelCounts = {}  # number of rows for each class label
    for featVec in dataSet:
        currentLabel = featVec[-1]  # the class label is the last column
        if currentLabel not in labelCounts:
            labelCounts[currentLabel] = 0
        labelCounts[currentLabel] += 1
    shannonEnt = 0.0
    for key in labelCounts:
        prob = float(labelCounts[key]) / numEntries  # p_i, the proportion of class i
        shannonEnt -= prob * log(prob, 2)
    return shannonEnt
```
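calcShannonEnt counts how often each class label occurs and then sums $-p \log_2 p$. The same computation can be written more compactly with `collections.Counter`. This is only a sketch: the name shannon_entropy is our own, not part of the original code, and each term is written as $p \log_2(1/p)$, which has the same value but keeps a single-class data set at 0.0 rather than -0.0.

```python
from collections import Counter
from math import log2


def shannon_entropy(rows):
    """Entropy of the class labels, which sit in the last column of each row."""
    counts = Counter(row[-1] for row in rows)  # occurrences of each class label
    n = len(rows)
    # p * log2(1/p) equals -p * log2(p); this form keeps a pure set at 0.0
    return sum(c / n * log2(n / c) for c in counts.values())


toy = [[1, 1, 'yes'], [1, 1, 'yes'], [1, 0, 'no'], [0, 1, 'no'], [0, 1, 'no']]
print(round(shannon_entropy(toy), 2))  # 0.97
```

More classes mean more mixing. Relabeling the first row as a third class, `'maybe'`, raises the entropy to about 1.371.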

```python
# Split the data set on a given feature:
# return the rows whose feature `axis` equals `value`, with that column removed
def splitDataSet(dataSet, axis, value):
    retDataSet = []
    for featVec in dataSet:
        if featVec[axis] == value:
            reducedFeatVec = featVec[:axis]  # columns before the feature (a new list)
            reducedFeatVec.extend(featVec[axis+1:])  # columns after the feature
            retDataSet.append(reducedFeatVec)
    return retDataSet
```
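splitDataSet scans the whole data set once for each value it is asked about. When every subset is needed anyway, as in chooseBestFeatureToSplit, a single pass can build all of them. A sketch, using the hypothetical name partition_by_feature:

```python
from collections import defaultdict


def partition_by_feature(rows, axis):
    """Group rows by their value in column `axis`, removing that column.

    Gives the same subsets as calling splitDataSet(rows, axis, v) for each
    distinct value v, but in a single pass over the data.
    """
    groups = defaultdict(list)
    for row in rows:
        groups[row[axis]].append(row[:axis] + row[axis + 1:])
    return dict(groups)


toy = [[1, 1, 'yes'], [1, 1, 'yes'], [1, 0, 'no'], [0, 1, 'no'], [0, 1, 'no']]
print(partition_by_feature(toy, 0))
# {1: [[1, 'yes'], [1, 'yes'], [0, 'no']], 0: [[1, 'no'], [1, 'no']]}
```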

```python
# Try every feature, split the data set on it, and return the index of the
# feature whose split gives the largest information gain
def chooseBestFeatureToSplit(dataSet):
    numFeatures = len(dataSet[0]) - 1  # the last column is the class label; the rest are features
    baseEntropy = calcShannonEnt(dataSet)  # entropy of the whole data set, Entropy(S)
    # Start below any possible gain so a valid feature index is always returned
    bestInfoGain = -1.0
    bestFeature = -1
    for i in range(numFeatures):
        featList = [example[i] for example in dataSet]  # every value feature i takes
        uniqueVals = set(featList)
        newEntropy = 0.0
        for value in uniqueVals:  # expected entropy after splitting on feature i
            subDataSet = splitDataSet(dataSet, i, value)
            prob = len(subDataSet) / float(len(dataSet))  # weight |S_v| / |S|
            newEntropy += prob * calcShannonEnt(subDataSet)  # accumulate the weighted sum
        infoGain = baseEntropy - newEntropy  # information gain of feature i
        if infoGain > bestInfoGain:
            bestInfoGain = infoGain
            bestFeature = i
    return bestFeature
```
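To see the numbers chooseBestFeatureToSplit compares on the toy data set, this sketch computes the information gain of every feature column. The helper names entropy and information_gains are our own.

```python
from collections import Counter
from math import log2


def entropy(rows):
    """Entropy of the class labels in the last column of each row."""
    n = len(rows)
    return sum(c / n * log2(n / c) for c in Counter(r[-1] for r in rows).values())


def information_gains(rows):
    """Information gain of every feature column, in column order."""
    base = entropy(rows)
    gains = []
    for i in range(len(rows[0]) - 1):  # the last column is the class label
        groups = {}
        for r in rows:
            groups.setdefault(r[i], []).append(r)  # subset S_v for each value v of feature i
        expected = sum(len(g) / len(rows) * entropy(g) for g in groups.values())
        gains.append(base - expected)
    return gains


toy = [[1, 1, 'yes'], [1, 1, 'yes'], [1, 0, 'no'], [0, 1, 'no'], [0, 1, 'no']]
print([round(g, 3) for g in information_gains(toy)])  # [0.42, 0.171]
```

Feature 0 ('no surfacing') has the larger gain, which matches the best feature 0 reported in the test output.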

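```python
# The functions above are all the building blocks of the decision tree.
# Starting from the raw data set, split on the best attribute; after each split,
# the data is passed down to the next node of that branch.
# Recursion stops when every attribute has been used, or when all instances
# under a branch have the same class.
# If all instances have the same class, the result is a leaf (terminal) node.
# If every attribute has been used but the class labels are still mixed,
# decide by majority vote.

# Return the class name that occurs most often
def majorityCnt(classList):
    classCount = {}
    for vote in classList:
        if vote not in classCount:
            classCount[vote] = 0
        classCount[vote] += 1
    # Sort (label, count) pairs by count, largest first
    sortedClassCount = sorted(classCount.items(), key=operator.itemgetter(1), reverse=True)
    return sortedClassCount[0][0]
```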
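The same majority vote can be written with `collections.Counter.most_common`. A sketch, with the hypothetical name majority_class. Under Python 3.7 and later both versions break ties in favor of the label seen first: dicts keep insertion order, `sorted()` stays stable even with `reverse=True`, and `most_common()` orders equal counts by first appearance.

```python
from collections import Counter


def majority_class(class_list):
    """Most frequent class label; ties go to the label seen first."""
    return Counter(class_list).most_common(1)[0][0]


print(majority_class(['yes', 'no', 'no']))  # no
print(majority_class(['yes', 'no']))  # yes (tie: first seen wins)
```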

```python
# Build the decision tree recursively
def createTree(dataSet, labels):
    classList = [example[-1] for example in dataSet]  # class label of every row (last column)
    if classList.count(classList[0]) == len(classList):  # all instances share one class: stop splitting
        return classList[0]
    if len(dataSet[0]) == 1:  # only the label column is left: every feature has been used
        return majorityCnt(classList)  # so fall back to a majority vote
    bestFeat = chooseBestFeatureToSplit(dataSet)
    print('the bestFeature in creating is :')
    print(bestFeat)
    bestFeatLabel = labels[bestFeat]  # e.g. 'no surfacing' at the root
    myTree = {bestFeatLabel: {}}  # nested dict; the value starts as an empty dict
    # Labels without the chosen feature; the caller's list is left unchanged
    subLabels = labels[:bestFeat] + labels[bestFeat + 1:]
    featValues = [example[bestFeat] for example in dataSet]  # values of the chosen feature
    uniqueVals = set(featValues)
    for value in uniqueVals:  # recurse into one branch per feature value
        myTree[bestFeatLabel][value] = createTree(splitDataSet(dataSet, bestFeat, value), subLabels)
    return myTree
```

```python
# A small test on the toy data set above
def testTree1():
    myDat, labels = createDataSet()
    val = calcShannonEnt(myDat)
    print('The Shannon entropy is: %.2f' % val)  # entropy of the class labels, not an accuracy
    retDataSet1 = splitDataSet(myDat, 0, 1)
    print(myDat)
    print(retDataSet1)
    retDataSet0 = splitDataSet(myDat, 0, 0)
    print(myDat)
    print(retDataSet0)
    bestfeature = chooseBestFeatureToSplit(myDat)
    print('the bestFeature is :')
    print(bestfeature)
    tree = createTree(myDat, labels)
    print(tree)
```

The corresponding output:

```text
>>> import TREE
>>> TREE.testTree1()
The Shannon entropy is: 0.97
[[1, 1, 'yes'], [1, 1, 'yes'], [1, 0, 'no'], [0, 1, 'no'], [0, 1, 'no']]
[[1, 'yes'], [1, 'yes'], [0, 'no']]
[[1, 1, 'yes'], [1, 1, 'yes'], [1, 0, 'no'], [0, 1, 'no'], [0, 1, 'no']]
[[1, 'no'], [1, 'no']]
the bestFeature is :
0
the bestFeature in creating is :
0
the bestFeature in creating is :
0
{'no surfacing': {0: 'no', 1: {'flippers': {0: 'no', 1: 'yes'}}}}
```
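The nested dictionary returned by createTree is itself a set of rules, one per root-to-leaf path: the "series of rules" mentioned at the start of the article. A sketch that flattens such a tree into if-then rules; tree_to_rules is a hypothetical helper, and the tree literal is the one printed above.

```python
def tree_to_rules(tree, path=()):
    """Flatten a nested-dict decision tree into (conditions, class label) pairs.

    An internal node looks like {feature_name: {feature_value: subtree_or_label}};
    anything that is not a dict is a leaf holding a class label.
    """
    if not isinstance(tree, dict):  # leaf: the path so far is one complete rule
        return [(list(path), tree)]
    feature = next(iter(tree))  # the single key is the feature tested here
    rules = []
    for value, subtree in tree[feature].items():
        rules.extend(tree_to_rules(subtree, path + ((feature, value),)))
    return rules


tree = {'no surfacing': {0: 'no', 1: {'flippers': {0: 'no', 1: 'yes'}}}}
for conditions, label in tree_to_rules(tree):
    tests = ' and '.join(f'{name} == {value}' for name, value in conditions)
    print(f'if {tests} then {label}')
# if no surfacing == 0 then no
# if no surfacing == 1 and flippers == 0 then no
# if no surfacing == 1 and flippers == 1 then yes
```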

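It is also worth adding a function that classifies new samples with the decision tree.

Building a decision tree can take a long time on large data sets, so it is better to serialize the finished tree with Python's pickle module, save it to disk, and load it again when it is needed.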

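```python
# Classify one sample by walking the tree from the root down to a leaf
def classify(inputTree, featLabels, testVec):
    firstStr = next(iter(inputTree))  # the node's only key: the feature tested here
    secondDict = inputTree[firstStr]  # maps each value of that feature to a subtree or a label
    featIndex = featLabels.index(firstStr)  # where that feature sits in testVec
    key = testVec[featIndex]
    valueOfFeat = secondDict[key]  # KeyError if this value never occurred in training
    if isinstance(valueOfFeat, dict):  # internal node: keep descending
        classLabel = classify(valueOfFeat, featLabels, testVec)
    else:  # leaf: a class label
        classLabel = valueOfFeat
    return classLabel
```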
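classify looks up each node's feature name in featLabels to decide which element of testVec to read, so featLabels must be the full list of feature names in test-vector order (`['no surfacing', 'flippers']` for the toy data). It raises KeyError when a sample has a feature value that never occurred in training. A sketch of an iterative variant that returns a fallback value instead; the function name and its `default` parameter are hypothetical.

```python
def classify_with_default(tree, feat_labels, test_vec, default=None):
    """Classify test_vec with a nested-dict tree.

    Returns `default` instead of raising KeyError when test_vec has a feature
    value that no branch of the tree covers.
    """
    node = tree
    while isinstance(node, dict):
        feature = next(iter(node))  # feature tested at this node
        value = test_vec[feat_labels.index(feature)]  # that feature's value in the sample
        branches = node[feature]
        if value not in branches:  # value never seen in training: no branch to follow
            return default
        node = branches[value]
    return node  # leaf: a class label


tree = {'no surfacing': {0: 'no', 1: {'flippers': {0: 'no', 1: 'yes'}}}}
labels = ['no surfacing', 'flippers']
print(classify_with_default(tree, labels, [1, 0]))  # no
print(classify_with_default(tree, labels, [1, 1]))  # yes
print(classify_with_default(tree, labels, [2, 1], default='unknown'))  # unknown
```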

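```python
import pickle

# Save the tree to disk
def storeTree(inputTree, filename):
    with open(filename, 'wb') as fw:  # pickle writes bytes, so the file is opened in binary mode
        pickle.dump(inputTree, fw)

# Load a tree saved by storeTree
def grabTree(filename):
    with open(filename, 'rb') as fr:
        return pickle.load(fr)
```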
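A quick round trip in the same spirit as storeTree and grabTree, writing to a fresh temporary directory; the file name classifierStorage.pkl is hypothetical.

```python
import os
import pickle
import tempfile

tree = {'no surfacing': {0: 'no', 1: {'flippers': {0: 'no', 1: 'yes'}}}}

# Hypothetical file name inside a new temporary directory
path = os.path.join(tempfile.mkdtemp(), 'classifierStorage.pkl')
with open(path, 'wb') as fw:  # pickle writes bytes, so use binary mode
    pickle.dump(tree, fw)
with open(path, 'rb') as fr:
    loaded = pickle.load(fr)

print(loaded == tree)  # True
```

Because the tree consists only of dicts, strings, and ints, the loaded copy compares equal to the original, even though it is a separate object.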
