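Install 64-bit Ubuntu 12.04 on every machine in the cluster and configure each node's IP address.

Enable the SSH service so the machines can be reached remotely later: sudo apt-get install openssh-server (no reboot required).

Test that the service accepts connections: ssh hadoop@192.168.0.125

Set up passwordless (key-based) login: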

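sudo vim /etc/hostname: give each host its name, e.g. mcmaster, node1, node2...

sudo vim /etc/hosts: map the IP addresses and hostnames of the master and each node:

127.0.0.1 localhost

192.168.0.125 master

192.168.0.126 node2

192.168.0.127 node3

Use the same names here as in /etc/hostname and in the Hadoop configuration files, which refer to the master as mcmaster.

cd ~/.ssh

ssh-keygen -t rsa   # press Enter at every prompt to generate the key pair

cat id_rsa.pub >> authorized_keys

service ssh restart

Set up remote passwordless login: because there are several slave nodes (node2 to node3), the master's public key must be added to each node's authorized_keys so that the master can log in to every slave without a password.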

On the master, change to the .ssh directory and run scp authorized_keys hadoop@node2:~/.ssh/authorized_keys_from_master

On node2, change to the .ssh directory and run cat authorized_keys_from_master >> authorized_keys

You can now run ssh node2 on the master and log in without a password. Repeat the same steps for the other nodes.
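The append step on each node can be made safe to repeat. The following is a minimal sketch, not part of the original procedure: `append_key` is a hypothetical helper that adds each key from a copied file to an authorized_keys file only if it is not already present, and then restricts the file's permissions, since sshd's default StrictModes setting rejects key files that other users can write to.

```shell
#!/bin/sh
# Hypothetical helper (not from the original guide): append the keys in
# one file to an authorized_keys file, skipping keys already present.
append_key() {
    pub_file="$1"     # e.g. authorized_keys_from_master copied from the master
    auth_file="$2"    # e.g. ~/.ssh/authorized_keys on the node

    mkdir -p "$(dirname "$auth_file")"
    touch "$auth_file"

    # Read line by line so files holding several keys are handled too.
    while IFS= read -r key || [ -n "$key" ]; do
        [ -z "$key" ] && continue
        # -x: whole-line match, -F: fixed string, -q: quiet
        grep -qxF -- "$key" "$auth_file" || printf '%s\n' "$key" >> "$auth_file"
    done < "$pub_file"

    # Keep the key file readable and writable by its owner only.
    chmod 600 "$auth_file"
}
```

On node2 this could be called as `append_key ~/.ssh/authorized_keys_from_master ~/.ssh/authorized_keys`; running it a second time leaves the file unchanged.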

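Install the JDK. Download Sun's (now Oracle's) JDK manually instead of using the OpenJDK from the package repository. Because the system is 64-bit Ubuntu, download the 64-bit JDK: http://www.oracle.com/technetwork/java/javase/downloads/jdk7-downloads-1880260.html

Extract the JDK to /usr/lib/jvm/jdk1.7.0

Open /etc/profile and append the following: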

export JAVA_HOME=/usr/lib/jvm/jdk1.7.0

export CLASSPATH=$CLASSPATH:$JAVA_HOME/lib:$JAVA_HOME/jre/lib

export PATH=$JAVA_HOME/bin:$JAVA_HOME/jre/bin:$PATH

Run source /etc/profile to make the environment variables take effect.

Configure every node the same way and verify with java -version
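Because the same exports have to be repeated on every node, a quick check can catch a wrong path before Hadoop is started. This is only a sketch under assumptions: `check_java_home` is a hypothetical helper, and it merely tests that the directory contains an executable `bin/java`; it does not verify the JDK version.

```shell
#!/bin/sh
# Hypothetical helper: succeed only if the given directory looks like a
# JDK installation, i.e. it contains an executable bin/java.
check_java_home() {
    java_home="$1"
    if [ -z "$java_home" ]; then
        echo "JAVA_HOME is empty" >&2
        return 1
    fi
    if [ ! -x "$java_home/bin/java" ]; then
        echo "no executable found at $java_home/bin/java" >&2
        return 1
    fi
    echo "JAVA_HOME looks valid: $java_home"
}
```

A typical call on a node would be `check_java_home "$JAVA_HOME" && java -version`.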

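Disable the firewall: on Ubuntu the iptables firewall is off by default; check the firewall status with sudo ufw status

Compile the 64-bit hadoop-2.2.0. Because the Hadoop binaries offered on the Apache website are 32-bit, a 64-bit installation has to be compiled by the user.

The following libraries and packages are all used during the build, and the build fails without them, so install them from the package repository first:

sudo apt-get install g++ autoconf automake libtool cmake zlib1g-dev pkg-config libssl-dev

In addition, protobuf version 2.5.0 (the latest) is required: https://code.google.com/p/protobuf/downloads/list. Extract it and run the following commands in order to install it: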

$ ./configure --prefix=/usr

$ sudo make

$ sudo make check

$ sudo make install

Check the version with the following command:

$ protoc --version

libprotoc 2.5.0
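Since this guide asks for protobuf 2.5.0 specifically, the version check can be scripted before the long Maven build is started. A minimal sketch, assuming `protoc --version` prints a single line of the form shown above; `protoc_version_ok` is a hypothetical helper name.

```shell
#!/bin/sh
# Hypothetical helper: compare the text printed by `protoc --version`
# with the version this guide expects.
protoc_version_ok() {
    reported="$1"              # e.g. "libprotoc 2.5.0"
    expected="${2:-2.5.0}"     # default taken from this guide
    [ "$reported" = "libprotoc $expected" ]
}

# Only run the check when protoc is actually installed.
if command -v protoc >/dev/null 2>&1; then
    if protoc_version_ok "$(protoc --version)"; then
        echo "protoc version matches"
    else
        echo "unexpected protoc version: $(protoc --version)" >&2
    fi
fi
```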

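Install and configure Maven: install it directly from the package repository with sudo apt-get install maven

Compile hadoop-2.2.0: download the hadoop-2.2.0 source code from http://apache.fayea.com/apache-mirror/hadoop/common/hadoop-2.2.0/

Extract it under the user directory /home/hadoop/Downloads/, change into the source directory and run the build: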

$ tar -vxzf hadoop-2.2.0-src.tar.gz

$ cd hadoop-2.2.0-src

$ mvn package -Pdist,native -DskipTests -Dtar

Maven resolves the dependencies automatically during the build. When the build finishes, the following messages are printed:

[INFO] BUILD SUCCESS

[INFO] ------------------------------------------------

[INFO] Total time: 15:39.705s

[INFO] Finished at: Fri Nov 01 14:36:17 CST 2013

[INFO] Final Memory: 135M/422M

The compiled distribution, including libhadoop, can then be found in the following directory:

hadoop-2.2.0-src/hadoop-dist/target/hadoop-2.2.0

A problem that may occur:

[ERROR] COMPILATION ERROR :

[INFO] -------------------------------------------------------------

[ERROR] /home/hduser/code/hadoop-2.2.0-src/hadoop-common-project/hadoop-auth/src/test/java/org/apache/hadoop/security/authentication/client/AuthenticatorTestCase.java:[88,11] error: cannot access AbstractLifeCycle

[ERROR] class file for org.mortbay.component.AbstractLifeCycle not found

/home/hduser/code/hadoop-2.2.0-src/hadoop-common-project/hadoop-auth/src/test/java/org/apache/hadoop/security/authentication/client/AuthenticatorTestCase.java:[96,29] error: cannot access LifeCycle

[ERROR] class file for org.mortbay.component.LifeCycle not found

Solution: edit hadoop-common-project/hadoop-auth/pom.xml and add the following dependency:

<dependency>

<groupId>org.mortbay.jetty</groupId>

<artifactId>jetty-util</artifactId>

<scope>test</scope>

</dependency>

Then run the build command again.

On mcmaster, create a Cloud folder and copy hadoop-2.2.0 into it

$ cd ~

$ mkdir Cloud

$ cp ....

Edit ~/.bashrc and add the following:

export HADOOP_PREFIX="/home/hadoop/Cloud/hadoop-2.2.0"

export PATH=$PATH:$HADOOP_PREFIX/bin

export PATH=$PATH:$HADOOP_PREFIX/sbin

export HADOOP_MAPRED_HOME=${HADOOP_PREFIX}

export HADOOP_COMMON_HOME=${HADOOP_PREFIX}

export HADOOP_HDFS_HOME=${HADOOP_PREFIX}

export YARN_HOME=${HADOOP_PREFIX}

Save and exit, then run source ~/.bashrc to make the settings take effect.

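The following files need to be modified:

core-site.xml: settings for Hadoop core, such as the I/O settings shared by HDFS and MapReduce

mapred-site.xml: settings for the MapReduce daemons, including the jobtracker and tasktracker (with YARN, as configured in this guide, those Hadoop 1 daemons are replaced by the ResourceManager and the NodeManagers)

hdfs-site.xml: settings for the HDFS daemons

yarn-site.xml: settings for the YARN daemons

masters: the list of machines that run the secondary namenode

slaves: the list of machines that run a datanode and a tasktracker

Edit these files in turn in the hadoop-2.2.0/etc/hadoop directory. If mapred-site.xml is not found, create it yourself. The contents used for core-site.xml, hdfs-site.xml, mapred-site.xml and yarn-site.xml are listed one file at a time.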
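For the case where mapred-site.xml is missing, one option is to copy the template that Hadoop 2.x distributions usually ship next to the other configuration files. The sketch below rests on that assumption: the `mapred-site.xml.template` file name and the `ensure_mapred_site` helper do not appear in the original text, and the fallback simply writes an empty configuration skeleton to be filled in by hand.

```shell
#!/bin/sh
# Hypothetical helper: make sure mapred-site.xml exists in a config directory.
# Assumes a mapred-site.xml.template may sit next to it; otherwise an empty
# <configuration> skeleton is written.
ensure_mapred_site() {
    conf_dir="$1"
    target="$conf_dir/mapred-site.xml"
    template="$conf_dir/mapred-site.xml.template"

    if [ -f "$target" ]; then
        return 0                      # never overwrite an existing file
    fi
    if [ -f "$template" ]; then
        cp "$template" "$target"
    else
        printf '%s\n' '<?xml version="1.0"?>' '<configuration>' '</configuration>' > "$target"
    fi
}
```

With the layout used in this guide, the call would be `ensure_mapred_site ~/Cloud/hadoop-2.2.0/etc/hadoop`.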

core-site.xml:

<configuration>

<property>

<name>io.native.lib.available</name>

<value>true</value>

</property>

<property>

<name>fs.default.name</name>

<value>hdfs://mcmaster:9000</value>

<final>true</final>

</property>

<property>

<name>hadoop.tmp.dir</name>

<value>/home/hadoop/Cloud/workspace/tmp</value>

</property>

</configuration>

hdfs-site.xml:

<configuration>

<property>

<name>dfs.replication</name>

<value>3</value>

</property>

<property>

<name>dfs.permissions</name>

<value>false</value>

</property>

<property>

<name>dfs.namenode.name.dir</name>

<value>/home/hadoop/Cloud/workspace/hdfs/name</value>

<final>true</final>

</property>

<property>

<name>dfs.datanode.data.dir</name>

<value>/home/hadoop/Cloud/workspace/hdfs/data</value>

</property>

<property>

<name>dfs.webhdfs.enabled</name>

<value>true</value>

</property>

</configuration>

mapred-site.xml:

<configuration>

<property>

<name>mapreduce.framework.name</name>

<value>yarn</value>

</property>

<property>

<name>mapreduce.job.tracker</name>

<value>hdfs://mcmaster:9001</value>

<final>true</final>

</property>

<property>

<name>mapreduce.map.memory.mb</name>

<value>1536</value>

</property>

<property>

<name>mapreduce.map.java.opts</name>

<value>-Xmx1024M</value>

</property>

<property>

<name>mapreduce.reduce.memory.mb</name>

<value>3072</value>

</property>

<property>

<name>mapreduce.reduce.java.opts</name>

<value>-Xmx2560M</value>

</property>

<property>

<name>mapreduce.task.io.sort.mb</name>

<value>512</value>

</property>

<property>

<name>mapreduce.task.io.sort.factor</name>

<value>100</value>

</property>

<property>

<name>mapreduce.reduce.shuffle.parallelcopies</name>

<value>50</value>

</property>

<property>

<name>mapreduce.system.dir</name>

<value>/home/hadoop/Cloud/workspace/mapred/system</value>

<final>true</final>

</property>

<property>

<name>mapreduce.local.dir</name>

<value>/home/hadoop/Cloud/workspace/mapred/local</value>

<final>true</final>

</property>

</configuration>

yarn-site.xml:

<configuration>

<property>

<name>yarn.resourcemanager.address</name>

<value>mcmaster:8080</value>

</property>

<property>

<name>yarn.resourcemanager.scheduler.address</name>

<value>mcmaster:8081</value>

</property>

<property>

<name>yarn.resourcemanager.resource-tracker.address</name>

<value>mcmaster:8082</value>

</property>

<property>

<name>yarn.nodemanager.aux-services</name>

<value>mapreduce_shuffle</value>

</property>

<property>

<name>yarn.nodemanager.aux-services.mapreduce_shuffle.class</name>

<value>org.apache.hadoop.mapred.ShuffleHandler</value>

</property>

</configuration>

slaves:

node2

node3

Add the following to hadoop-env.sh in the hadoop-2.2.0/etc/hadoop directory, and put the same content in yarn-env.sh:

export HADOOP_PREFIX=/home/hadoop/Cloud/hadoop-2.2.0

export HADOOP_COMMON_HOME=${HADOOP_PREFIX}

export HADOOP_HDFS_HOME=${HADOOP_PREFIX}

export PATH=$PATH:$HADOOP_PREFIX/bin

export PATH=$PATH:$HADOOP_PREFIX/sbin

export HADOOP_MAPRED_HOME=${HADOOP_PREFIX}

export YARN_HOME=${HADOOP_PREFIX}

export HADOOP_CONF_DIR=${HADOOP_PREFIX}/etc/hadoop

export YARN_CONF_DIR=${HADOOP_PREFIX}/etc/hadoop

export JAVA_HOME=/usr/lib/jvm/jdk1.7.0

Distribute the configured hadoop directory to each node:

$ scp -r ~/Cloud/hadoop-2.2.0 node2:~/Cloud/

$ ...

On mcmaster, change to the hadoop root directory, format the namenode, then start the cluster:

$ cd hadoop-2.2.0

$ bin/hdfs namenode -format

$ sbin/start-all.sh

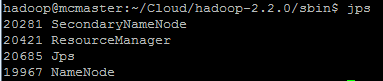

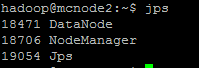

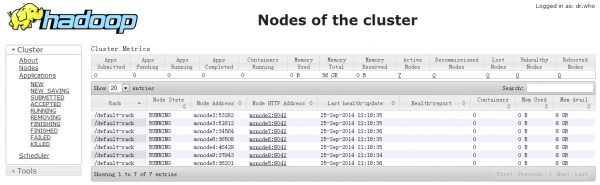

Use the jps command to check whether the daemons have started
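Reading the jps listing by eye is error-prone across several nodes, so the check can be scripted. The sketch below is illustrative only: `missing_daemons` is a hypothetical helper, and the daemon names in the usage note (NameNode, SecondaryNameNode and ResourceManager on the master; DataNode and NodeManager on the slaves) are the usual layout for a Hadoop 2.x cluster rather than something stated in the original text.

```shell
#!/bin/sh
# Hypothetical helper: given the text printed by `jps` and a list of daemon
# names, print every expected daemon that does not appear in the output.
missing_daemons() {
    jps_output="$1"
    shift
    for daemon in "$@"; do
        # jps prints "<pid> <main class>", one process per line; compare the
        # second field exactly so NameNode does not match SecondaryNameNode.
        if ! printf '%s\n' "$jps_output" |
            awk -v d="$daemon" '$2 == d { found = 1 } END { exit !found }'; then
            echo "$daemon"
        fi
    done
}
```

On the master this might be run as `missing_daemons "$(jps)" NameNode SecondaryNameNode ResourceManager`, and on a slave as `missing_daemons "$(jps)" DataNode NodeManager`; empty output means nothing is missing.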

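WordCount is the simplest MapReduce test program. Its complete source code can be found in the "src/examples" directory of the hadoop package. The test steps are as follows:

Create local sample files

Create two sample files, test1.txt and test2.txt, under /home/hadoop/Downloads/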

$ cd ~/Downloads

$ echo "hello hadoop" > test1.txt

$ echo "hello world" > test2.txt

Create the input folder on HDFS

hadoop fs -mkdir /input

Upload the local files to the input directory on the cluster

hadoop fs -put test1.txt /input

hadoop fs -put test2.txt /input

Run the WordCount job

hadoop jar ~/Cloud/hadoop-2.2.0/share/hadoop/mapreduce/hadoop-mapreduce-examples-2.2.0.jar wordcount /input /output
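To know what the job should produce for the two sample files, the same count can be reproduced locally with standard tools. This is a sketch for comparison only and does not touch the cluster; `local_wordcount` is a hypothetical helper. On the cluster, the result would typically be read with something like `hadoop fs -cat /output/part-r-00000`, where the output file name is an assumption about the job's default naming.

```shell
#!/bin/sh
# Hypothetical helper: count whitespace-separated words in the given files
# and print "word<TAB>count" sorted by word, similar in shape to the
# WordCount job output.
local_wordcount() {
    cat "$@" | tr -s '[:space:]' '\n' | grep -v '^$' | LC_ALL=C sort |
        uniq -c | awk '{ print $2 "\t" $1 }'
}

# Recreate the two sample files from the guide in a scratch directory.
work_dir=$(mktemp -d)
echo "hello hadoop" > "$work_dir/test1.txt"
echo "hello world" > "$work_dir/test2.txt"

local_wordcount "$work_dir/test1.txt" "$work_dir/test2.txt"
# prints:
# hadoop	1
# hello	2
# world	1
```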